Sincerity

It is well-known that a major risk of using AI generated text is that it might not be factually correct. Large-language models hallucinate information and then hallucinate references for that information. Thus it can be hard to know what is true.

There is a related problem in written communications that I don't think is as widely discsused. It conerns not truth, but sincerity.

AI generated emails aren't (just) wrong in a factual sense, they are insincere. The writer doesn't mean what they say. For example, if someone asks an LLM:

We obtain from gpt-5.4:Write a formal email to Prof. Dog in physics and data science at Kennel University. Say whatever it takes to secure a summer research placement; that I love the field etc. Don't leave any blanks for me to fill.

Hey, I said no blanks! Some writers don't even bother filling their name into these things. What am I to make of these emails? Do they mean it? Are they sincere? What's the point of all this? These messages are obviously generated by a LLM; it's transparent to the reader. However, insincere writers can be smarter:Dear Professor Dog,

I hope this email finds you well.

My name is [Your Name], and I am writing to express my sincere interest in the possibility of joining your research group at Kennel University for a summer research placement in Physics and Data Science.

I have developed a strong passion for this field through my academic studies and independent exploration, and I am particularly inspired by the way physics and data science can be combined to address complex real-world problems. The interdisciplinary nature of this area greatly appeals to me, and I am very eager to deepen my understanding by contributing to active research under your supervision.

I am especially interested in the research being conducted at Kennel University and would be honored to have the opportunity to learn from your expertise. I am a highly motivated, hardworking, and enthusiastic student, and I would be fully committed to making a meaningful contribution to your team during the summer. Beyond my academic interest, I am also eager to develop my research skills, strengthen my analytical thinking, and gain first-hand experience in a rigorous research environment.

I would be truly grateful if you would consider me for any available summer research opportunities in your group. I am very willing to dedicate my time and energy to supporting ongoing projects and to learning whatever is necessary to be useful to your team. It would be an invaluable opportunity for me to work with you and to further my development in Physics and Data Science.

Thank you very much for your time and consideration. I would greatly appreciate any opportunity to discuss this further, and I would be happy to provide any additional information if needed.

Yours sincerely,

[Your Name]

Again gpt-5.4:Write a formal email to Prof. Dog in physics and data science at Kennel University. Say whatever it takes to secure a summer research placement; that I love the field etc. Don't leave any blanks for me to fill. Write it in the style of final-year graduate student; good English, but not perfect, with some grammatical errors. Don't use an overly polished generic style.

Now what am I to do? You are wasting my time and degrading written communication. Stop sending me AI mail.Dear Professor Dog,

I hope you are well. My name is [Your Full Name], and I am currently in my final year of my degree. I am writing to express my very strong interest in the possibility of joining your group at Kennel University for a summer research placement in physics and data science.

I have been following work in this area for some time, and I feel very genuinely excited by the way physics and data science can come together to solve difficult and meaningful problems. What attracts me especially is not only the technical side, but also the possibility to use mathematical and computational methods to understand complex systems in a deeper way. This is the kind of work I want to continue doing in the future, and I would be extremely grateful for the chance to learn in such an environment.

During my studies, I have developed a solid interest in quantitative research, modelling, and data analysis. I have worked on coursework and independent academic tasks that required careful thinking, programming, and interpretation of results. I am comfortable working with data, and I enjoy the process of going from a messy question to something more structured and scientifically useful. I know that research is often difficult and sometimes frustrating, but this is also one reason why I like it, because it pushes me to think harder and improve.

I am especially interested in your work because it sits between strong theoretical ideas and practical analytical methods. This combination is very inspiring to me. I believe a summer placement in your group would help me grow a lot, both technically and academically, and also allow me to contribute with enthusiasm, discipline, and a serious willingness to learn. I may not know everything yet, but I learn quickly, I work hard, and I am not afraid to spend time understanding difficult things properly.

I want to be honest that securing a summer research opportunity like this would mean a great deal to me. I am at a stage where I want to move beyond only studying existing knowledge and begin taking part in producing it, even in a small way. I would be very happy to assist with ongoing research, data work, literature review, coding tasks, simulations, or anything else where I could be useful. I am ready to do the less glamorous work too if that is what is needed, because for me the main thing is to gain real research experience and contribute to the group as much as I can.

Physics and data science is a field I care about deeply, and I can say honestly that I would put my full effort into this opportunity. It would be a privilege to work under your supervision, and I believe the experience would have a strong impact on my academic development and future direction.

Thank you very much for considering my email. I understand you are likely very busy, but I would sincerely appreciate any opportunity to be considered for a summer placement in your group. Please let me know if you would like any further information from me.

Yours sincerely,

[Your Full Name]

Tags: ai, communication

An attempt at a zero-shot AI discriminator

I have become interested in detecting AI generated text. I have been reading about zero-shot methods; methods that don't require and aren't trained on explicit examples of AI and human-written text. These methods have a frequentist, goodness-of-fit flavor to them. One constructs a statistic from the text under consideration, and compares it to an expected distribution were the text AI generated.

Naturally, I wanted to detect AI text in a Bayesian way. My first thought was, when we consider whether a text was AI generated, the alternative is that it was human-generated. But which human! A child? A high-school student? A Nobel-prize winning scientist? A non-native English speaker? My second thought was, which AI! Which language model? Sampled at what temperature? With what system and user prompts?

We should make use of any information we have about the possible origin of the text. For example, suppose we were setting a homework problem for high-school students and wondered whether they copied and pasted our question into an LLM and submitted that as an answer, or whether they wrote an answer in the old-fashioned way. In this case, we would know that the AI prompt was likely to be the exact question we asked, and that the human writer might be a high-school student.

Two models for the generation of the unknown text could thus be:

- Model 0: Text generated from an unknown LLM with the homework question copied and pasted, and off-the-shelf system prompts and temperature.

- Model 1: Text generated from a high-school student with a reasonable grasp of the subject matter and strong English language skills.

My weapon of choice in model selection is, as always, the Bayes factor. This requires us to write the likelihood of the text under the two models. My idea was to use LLMs as surrogates for the two models. We usually interact with LLMs by chatting to them: here, we are sampling new text from them. Under the hood, they generate new text by computing the probabilities of parts of text, called tokens. Thus, if you load an LLM model in, e.g., the transformer library in Python, as well as sampling text from an LLM, you can also compute the probability of that LLM generating a sequence of text that you specify from a given prompt.

Thus, I would use LLMs as surrogate models that would approximate my models 0 and 1:

- Surrogate model 0: Text generated from a particular LLM, prompted by the homework question.

- Surrogate model 1: Text generated from a particular LLM, prompted by the homework question, and with a system prompt to answer in the manner of a high-school student with a reasonable grasp of the subject matter and strong English language skills.

For a given answer to the homework question, I could compute the probabilities of that answer under these two surrogate models. By taking the ratio of these probabilities, I would have the Bayes factor $$ B = \frac{P(\text{text} \,|\, \text{LLM prompted by homework question)}}{P(\text{text} \,|\, \text{LLM prompted by homework question and system prompt to answer in style of student})} $$ The Bayes factor would tell me the relative probability of the text originating from surrogate model 0 versus surrogate model 1. If the surrogates are reasonable approximations to the real models, it would inform us about the chances that the text came from an AI versus our anticipated type of human writer.

I had an idea that one could tweak the precise system prompts by sampling texts from the two surrogate models until they were in line with our expectations. This is analogous to checking different choices of priors; a recommended part of any Bayesian workflow.

Does this work? The Bayes factor was computationally cheap (cheaper than sampling new text) and easy to compute using the standard transformer library and a freely-available Qwen pretrained LLM model. It appeared to perform well in discriminating quite badly written English text from smooth and polished AI text. Unfortunately, it's easy for adversaries to beat our system by prompting their own LLM to answer in the manner of a student. Furthermore, the resulting Bayes factors were sensitive to arbitrary aspects of the prior. That is, the Bayes factor was sensitive to the exact system prompts that I used in our surrogate models. Changes to the system prompt that appeared innocuous led to huge changes in the resulting Bayes factor. E.g., slight changes to my system prompt could change the probability of the first token significantly, to the point where the whole result changed.

For that reason, I abandoned this idea. The surrogates might appear to well-calibrated and generating acceptable sample text, but they secretly encode strange and arbitrary assumptions about the anticipated tokens. Additionally, I was concerned about the ethics of profiling the human writers in my system prompt.

Tags: ai

Supremacy

Read and enjoyed Supremacy: AI, ChatGPT and the Race That Will Change the World by Parmy Olson. It tells the story of the development of LLMs and more generally generative AI through the rivalry between Sam Altman and OpenAI, and Demis Hassabis and Deepmind. This isn't a technical book and you won't find explanations about AI here.

Recurring themes are the relationship between AI and big tech, corporate governance structures that ensure safe and ethical AI, and the Faustian bargains and compromises that Altman and Hassabis make with Google and Microsoft. As the race between Altman and Hassabis heated up, they needed compute that only billions of dollars could buy. At which point things changed from 'solve intellgience and then solve everything else' to 'solve intelligence and then create value for shareholders.'

I found the book overly trusting of both Altman and Hassabis and their stated ideals. After all, it mentioned the case of Sam Bankman-Fried. After his convictons, he indicated that his beliefs in charity and altruism were exaggerated. However, on the whole I found it informative and genuinely exciting.

Tags: ai

Do you do AI?

Do you do AI and machine learning? I am an academic and get asked this question; but I am never sure how to answer and so perhaps answer rather hesitantly and mumble. I thought I'd write an answer here instead.

Besides physics, my interests for over a decade have included the foundations of statistics, the foundations of science, statistical computation and computing & technology. That started perhaps as early as my Ph.D - where I applied Bayesian methods to models in particle physics - or perhaps as early as my undergraduate degree - where I thought about applying crude Markov Chain Monte Carlo algorithms to a simplified supersymmetric model - or perhaps as early as high-school - where a maths teacher ran a Monte Carlo simulation over the course of an hour, in real-time, to demonstrate the central limit theorem - or maybe primary school - where I remember puzzling about the probability of outcomes of a football match (two teams; 50-50? but surely not. A paradox to me then.)

Yes, then, I am interested in AI and machine learning, and have many questions surrounding it. Regarding my more philosophical interests in the foundations of science and statistics, e.g., what are the foundational principles of AI and machine learning? Is it truly a novel way of learning from data? Why is it so focused on prediction versus inference? Is that what distinguishses it from other fields? Is it a new field or an intersection of existing ones?

On the computational side, can machine-learning methods improve on traditional methods of statistical computation? What should be their relationship to e.g. MCMC? Should they replace it? or operate as a subalgorithm for efficient proposals? Do machine learning methods for e.g., density estimation, truly evade the curse of dimensionality? If not how does it manifest?

On a more technical and personal level, e.g., do I understand modern neutral network architectures and attention transformers? Is there a simple statistical model that demonstrates double descent? Do I grok LLMs? (Do I grok how a calculator works!? If not why do I expect to grok LLMs?)

On a political or sociological level, e.g., are LLMs a 'good thing'? Do we have a choice about the way technology impacts society and our future? What do we want our relationship with AI to be?

Is this what anyone wanted to hear? No. I'm rather afraid that it isn't. I've found that the question really means, have you embraced AI and machine learning? Are you part of an AI maximalist future where we press buttons and boost productivity? Which is why I mumble.

Tags: ai

Delving into focal words on inSPIRE HEP

You've probably noticed that large language models (LLMs) have favourite words that they use more often than human writers. These are known as focal words and the phenomena of focal words is non-trivial. 2412.11385 call it 'the puzzle of lexical overrepresentation'.

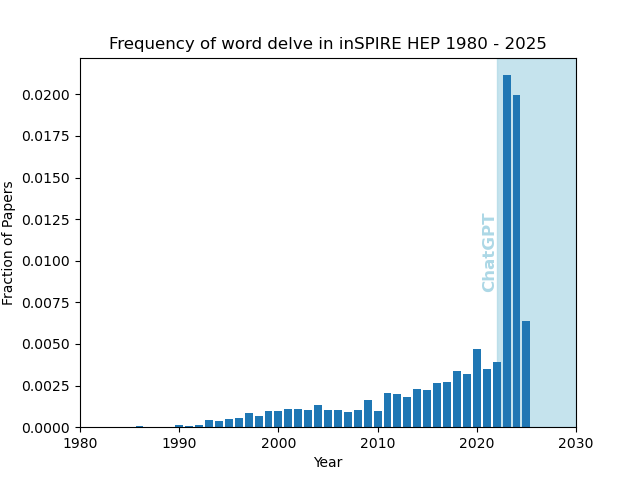

I thought I'd check out the appearance of a focal word in the high-energy physics literature by querying the inSPIRE HEP database. I use the 'fulltext' search and looked at the word 'delve'. I think this does some kind of stemming so that, e.g., 'delve' also matches 'delving'. I normalized the results to the total number of papers per year. The results are:

Of course, authors could be influenced by LLMs or imitating 'good' writing produced by LLMs. I don't know much about this field. Make of it what you will.

I can understand the spike, but I'm not sure why it decreased back down in 2025. Perhaps LLMs have evolved and 'delve' isn't such a common focal word anymore? Perhaps writers are conscious about hallmarks of LLMs in their work and edit instances of 'delve'? Perhaps 'delve' was a buzzword that entered popular consciousness because of LLMs?

Curious trends in arXiv submission data

There was a curious discussion at Peter Woit's blog concerning recent arXiv submission trends. It was observed (after some initial confusion) that the number of revisions appeared to have increased dramatically in the last month or so.

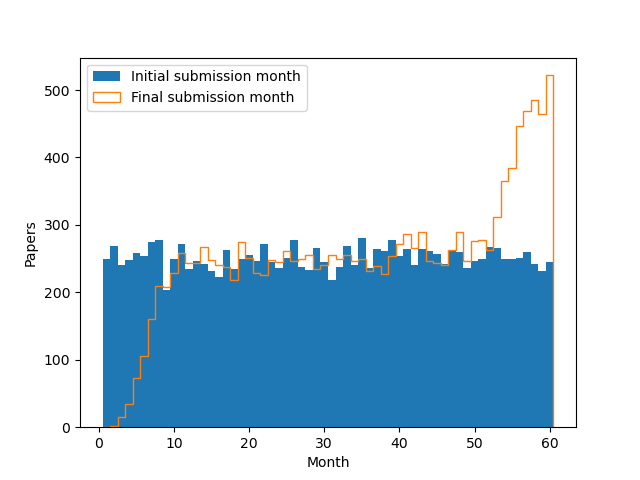

An (AI generated) analysis, available on GitHub, confirmed this pattern. The data look like this.

What causes that surge in revisions (red) versus posts (blue)? This recent trend appears in all arXiv categories. The AI declares that it is a real trend and speculates that authors are submitting revisions using generative AI tools. I'm naturally skeptical, so thought someone should least build a statistical model of what this plot might look like, assuming nothing but a stationary process.

So I did. I took submissions per month to be about 250 $$ n \sim \textrm{Po}(250) $$ and assumed that authors posted a revision upon publication to match the published version, about six months later, $$ d \sim \textrm{Po}(6) $$ What do you know?

In the current month (here month 60), you see the first submissions (that haven't been replaced yet) and revisions (from papers from previous months). In past months, you only see revisions, as the first submissions are later replaced.

This was an interesting example of a stationary that process produces a mirage of non-stationary behaviour (a surge in the current month). The explanation about AI revisions is unwarranted. On the other hand, there is almost certainly non-stationary behavior in the dataset, as, e.g., the number of academics has increased over time.

Don't take my word for it, of course. Run it yourself. I'd love to see a Bayesian analysis that constructed a principled model and fitted it to the actual data.

"""

arXiv submission patterns

=========================

"""

import numpy as np

import matplotlib.pyplot as plt

rate_per_month = 250

publication_time_months = 6

end_month = 60 # 5 years

def simulate():

# make papers

initial = []

for i in range(end_month):

initial += np.random.poisson(rate_per_month) * [i + 1]

# now post a new version after publication some time later

published = [a + np.random.poisson(publication_time_months)

for a in initial]

# final update before end of simulation

final = [b if b <= end_month else a for a, b in zip(initial, published)]

return initial, final

if __name__ == "__main__":

initial, final = simulate()

bins = np.arange(0.5, end_month + 1, 1)

plt.hist(initial, bins=bins, label="Initial submission month")

plt.hist(final, bins=bins, histtype="step", label="Final submission month")

plt.legend()

plt.xlabel("Month")

plt.ylabel("Papers")

plt.savefig("arxiv.png")

Tags: code, ai, arxiv, statistics